Security Risks of "OpenClaw things"

With great power comes great responsibility

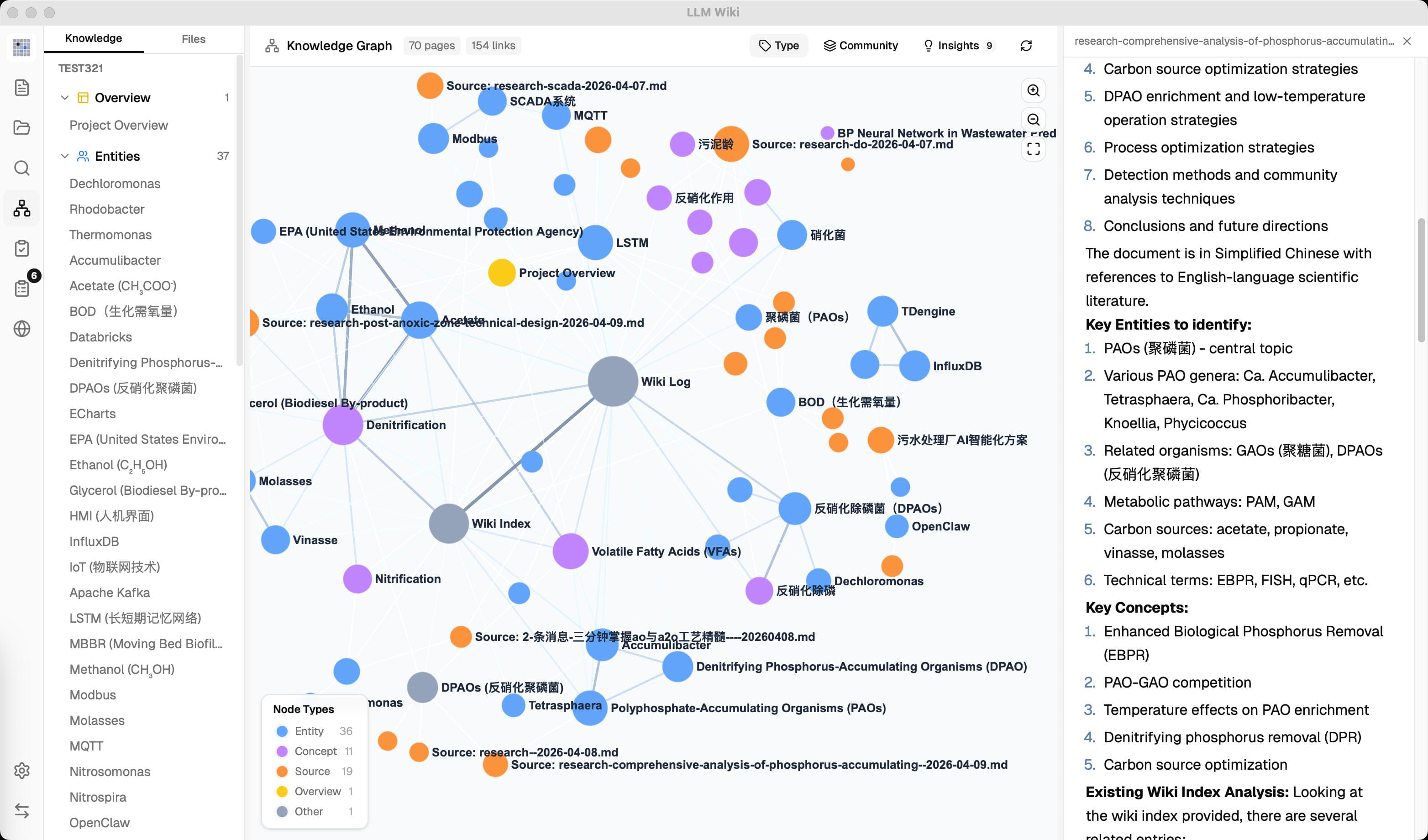

OpenClaw (also known as ClawdBot or MoltBot) is one of the fastest-growing open-source AI agent tools out there. It's more than a chatbot: it's a full agentic system that can read messages, browse the web, use tools, handle files, and act across connected services ...

... That's a lot of power. And with power comes risk ...

Right now, over 18,000 OpenClaw instances are exposed to the internet. Researchers found that nearly 15% of community-built skills contain malicious instructions (things like malware downloads or data theft). When these get taken down, they often pop back up under new names.

At a high level, OpenClaw has two main parts: a gateway that receives events and routes them, and an agent runtime that handles a turn, calls tools, stores state, and sends a reply. Because it can work across real systems, it should be treated like a high-impact automation platform, not like a normal chat app.

Why it is powerful: It can connect to real channels, tools, browsers, files, and external systems.

Why it is risky: It can take action based on content that may be unsafe, misleading, or malicious.

Below are some "what could go wrong" scenarios that we discovered:

Prompt injection can trigger the wrong action

OpenClaw pulls content from untrusted places: emails, chat messages, web pages, webhooks ... If an attacker hides malicious instructions inside that content, the model might follow them and invoke powerful tools such as shell, browser, or file access on the attacker's behalf, without anyone realizing it.

The maintainers themselves say: don't trust the model. Protection has to come from auth, sandboxing, and approvals, not from hoping the model won't get tricked.

Threat Scenario: An attacker sends a crafted message to a Slack channel that OpenClaw monitors. The hidden instructions tell the agent to export its config and send credentials to an external server. If tool permissions are too broad, the agent just does it.
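
One way to make "don't trust the model" concrete is to track where a turn's content came from and refuse high-risk tools once untrusted content is involved. The sketch below is a minimal illustration of that idea, not OpenClaw's actual policy engine: the tool names, the `Turn` class, and the source labels are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical names for illustration; not OpenClaw's real tool identifiers.
HIGH_RISK_TOOLS = frozenset({"shell", "file_write", "browser", "config_export"})
TRUSTED_SOURCES = frozenset({"operator"})


@dataclass
class Turn:
    """Tracks whether untrusted content has entered the current agent turn."""

    tainted_by: list[str] = field(default_factory=list)

    def ingest(self, source: str) -> None:
        # Record every source that is not explicitly trusted.
        if source not in TRUSTED_SOURCES:
            self.tainted_by.append(source)

    def may_call(self, tool: str) -> bool:
        # Once the turn is tainted, high-risk tools are refused outright,
        # regardless of what the model asks for.
        return tool not in HIGH_RISK_TOOLS or not self.tainted_by
```

In the Slack scenario above, the crafted message would taint the turn, so a request to call `config_export` would be refused by code rather than left to the model's judgment.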

A bad skill or plugin can become a supply chain problem

OpenClaw lets you install community-built skills and plugins. The problem is: plugins run in process with the same OS privileges as OpenClaw itself. A bad plugin is basically code execution on your machine.

Researchers have already found skills in the wild that download malware, steal data, and hide secrets. Cisco documented one that was "functionally malware": it silently sent files to an attacker's server. Trend Micro found skills distributing AMOS stealer on macOS.

Threat Scenario: You install a community skill that looks like a helpful scheduling tool. It quietly runs curl commands in the background and uploads your files to an external server.
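
Before installing a skill, it can help to run even a crude scan over its files for the kind of behavior described above. The following is a rough heuristic sketch, assuming the skill's instructions and scripts are available as plain text; the pattern list is an illustrative assumption, and an obfuscated payload would slip past it, so it complements a manual review rather than replacing one.

```python
import re

# Illustrative patterns only; a real review needs far more than regexes.
SUSPICIOUS_PATTERNS = {
    "network download": re.compile(r"\b(curl|wget)\b", re.IGNORECASE),
    "pipe to shell": re.compile(r"\|\s*(ba)?sh\b"),
    "base64 decode": re.compile(r"base64\s+(-d|--decode)"),
    "touches SSH keys or OpenClaw state": re.compile(r"~/\.(ssh|openclaw)\b"),
}


def scan_skill_text(text: str) -> list[str]:
    """Return the labels of every suspicious pattern found in the text."""
    return [
        label
        for label, pattern in SUSPICIOUS_PATTERNS.items()
        if pattern.search(text)
    ]
```

A hit does not prove malice (plenty of legitimate skills fetch URLs), but a "scheduling tool" that pipes a download into a shell deserves a closer look.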

The gateway becomes dangerous when exposure or auth is weak

The Gateway is OpenClaw's control plane. If it's exposed without proper auth, anyone on the internet can get operator-level access.

The official docs say: keep it loopback-only by default. If you put it behind a reverse proxy, make sure every route goes through that proxy. If even one path bypasses it, you're exposed.

Threat Scenario: An engineer puts OpenClaw behind a reverse proxy for convenience but misses a route. An attacker on the same network connects directly to the WebSocket and controls the agent: sending messages, running commands, browsing the web, all as the trusted operator.
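
A deployment script can verify the "loopback-only" rule before starting anything. This is a small sketch using Python's standard `ipaddress` module; the function name is hypothetical, and how the bind address is actually read from OpenClaw's configuration is left out on purpose, since that depends on your setup.

```python
import ipaddress


def is_loopback_bind(host: str) -> bool:
    """Return True only if the bind address is a loopback address."""
    if host == "localhost":
        # Assumption: "localhost" resolves to loopback on this machine.
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Any other hostname is treated as not loopback, to fail safe.
        return False
```

Note that `0.0.0.0` fails this check, which is the point: binding to all interfaces is exactly how a gateway ends up reachable by someone who skips the proxy.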

One shared agent can be abused by the wrong person

OpenClaw can sit in a Slack workspace or Telegram group where many people can talk to it. The problem: everyone is talking to the same agent, with the same tool permissions.

Even if memory is separated per user, that doesn't make it a real authorization boundary. Anyone in the channel, including external guests or compromised accounts, can steer the agent's tools.

Threat Scenario: A company runs an OpenClaw bot in Slack with access to internal files. A guest or compromised account sends a malicious request, and the bot reads sensitive documents or forwards data to another channel that the attacker controls.
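
A real authorization boundary means the sender's identity, not the channel, decides which tools a request may reach. Here is a minimal sketch of that check, assuming a wrapper sits in front of the agent; the role table, user IDs, and tool names are made up for illustration, and unknown senders fall back to a role with no tools.

```python
# Hypothetical roles, user IDs, and tool names for illustration.
ROLE_TOOLS = {
    "operator": {"file_read", "shell", "message_send"},
    "member": {"message_send"},
    "guest": set(),
}
USER_ROLES = {"U_ALICE": "operator", "U_BOB": "member"}


def authorize(sender_id: str, tool: str) -> bool:
    """Allow a tool only if the sender's role grants it; unknown senders are guests."""
    role = USER_ROLES.get(sender_id, "guest")
    return tool in ROLE_TOOLS[role]
```

With a check like this, a guest account asking the bot to read internal files is refused before the model ever sees a tool option.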

Browser and node control can turn into remote operator access

OpenClaw can control a real browser. It can also pair with remote nodes that expose camera, screen recording, location, and canvas. The docs say it clearly: this is operator-level remote capability.

If the browser is logged into corporate email or cloud consoles, the agent can access all of that. And by default, private-network destinations are allowed unless you explicitly block them.

Threat Scenario: The agent browses using a profile that's already logged into your company's admin panel. A malicious prompt tells it to navigate there, extract data, or perform actions. The browser becomes a pivot point into internal services.
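
If private-network destinations are not blocked for you, a filter in front of the browser tool can do it. The sketch below uses only the standard library and checks IP literals and a few obvious local hostnames. It is deliberately incomplete: a real filter would also have to resolve DNS names and check the resulting addresses, and the function name and hostname suffixes are assumptions.

```python
import ipaddress
from urllib.parse import urlsplit


def is_blocked_destination(url: str) -> bool:
    """Return True if the URL points at a private, loopback, or link-local target."""
    host = urlsplit(url).hostname
    if host is None:
        return True  # Unparseable URLs are refused rather than guessed at.
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Not an IP literal. Only obvious local names are caught here;
        # DNS resolution is out of scope for this sketch.
        return host == "localhost" or host.endswith((".local", ".internal"))
    return addr.is_private or addr.is_loopback or addr.is_link_local
```

This also catches the cloud metadata address `169.254.169.254`, a common target once an agent's browser can reach internal services.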

Broad shell and file access can lead to host compromise

OpenClaw's toolset includes shell execution, file read/write, session spawning, and patching. The docs warn against permissive settings and say that approval dialogs are just guardrails, not a real security boundary.

If a user enables broad tool profiles for convenience, a single malicious prompt can modify files, implant persistence, tamper with code, or spawn child sessions with even fewer restrictions.

Threat Scenario: A broad tool profile is enabled. A prompt injection causes the agent to modify local project files, add a backdoor, or spawn a less-restricted child session.
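
One narrow but useful control is to confine file tools to a single workspace directory. A minimal sketch with `pathlib`, assuming the file tool is wrapped by a check like this before any read or write; the function name is hypothetical.

```python
from pathlib import Path


def is_inside_workspace(workspace: str, requested: str) -> bool:
    """Return True if the requested path resolves to a location inside the workspace."""
    root = Path(workspace).resolve()
    # resolve() collapses ".." segments and follows existing symlinks, so
    # traversal tricks are judged by where they actually land.
    target = (root / requested).resolve()
    return target == root or root in target.parents
```

Both `../../etc/passwd` and an absolute path such as `/etc/passwd` fail the check, because the resolved target lands outside the workspace root.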

Secrets and transcripts can leak more than people expect

OpenClaw stores a lot of sensitive stuff under ~/.openclaw: Slack tokens, WhatsApp credentials, API keys, OAuth tokens, and conversation transcripts. If anyone or anything gets access to that folder, you've got a serious problem.

This doesn't even require remote code execution. A backup sync to a poorly-protected cloud folder, malware running under the same OS account, or even accidental sharing can expose everything.

Threat Scenario: The .openclaw directory gets synced to a cloud backup with public sharing. An attacker extracts Slack tokens, WhatsApp creds, and API keys, gaining persistent access to the victim's communications and connected services.
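
A quick audit of file permissions on that directory catches one class of leak: files that other local accounts can read. The sketch below assumes a POSIX system and only inspects permission bits. It says nothing about cloud sync or sharing settings, which need to be checked separately.

```python
import stat
from pathlib import Path


def find_loose_permissions(state_dir: str) -> list[str]:
    """Return paths under state_dir that group or other users can access."""
    root = Path(state_dir)
    loose = []
    for path in [root, *root.rglob("*")]:
        mode = path.lstat().st_mode
        if stat.S_ISLNK(mode):
            continue  # Symlink permission bits are not meaningful.
        # Any group or other permission bit counts as too permissive.
        if mode & (stat.S_IRWXG | stat.S_IRWXO):
            loose.append(str(path))
    return sorted(loose)
```

Running it against `Path.home() / ".openclaw"` should ideally return an empty list, meaning only the owning account can read the tokens and transcripts.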

Memory or config poisoning can create quiet persistence

OpenClaw uses workspace memory files like MEMORY.md and SOUL.md to store long-lived instructions. A malicious skill can modify these files so that harmful behavior persists across sessions, even after the original malicious conversation is gone.

The agent continues to follow attacker-defined instructions, and it looks like normal behavior. There's no obvious intrusion event. It just quietly keeps doing the wrong thing.

Threat Scenario: A malicious skill modifies MEMORY.md to include instructions that silently exfiltrate data or relax security policies. The next time the operator uses the assistant, the compromise looks like normal agent behavior.
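
Because this kind of tampering leaves no obvious event, it helps to create one. A simple option is to hash the memory files after a known-good review and compare later. The sketch below assumes the files sit directly in a workspace directory; the function names are hypothetical, and where the baseline is stored (ideally somewhere the agent cannot write) is up to you.

```python
import hashlib
from pathlib import Path

WATCHED_FILES = ("MEMORY.md", "SOUL.md")


def snapshot(workspace: str) -> dict[str, str]:
    """Return a SHA-256 digest for each watched file that exists."""
    digests = {}
    for name in WATCHED_FILES:
        path = Path(workspace) / name
        if path.is_file():
            digests[name] = hashlib.sha256(path.read_bytes()).hexdigest()
    return digests


def changed_files(baseline: dict[str, str], current: dict[str, str]) -> list[str]:
    """Return watched files that were added, removed, or modified since the baseline."""
    names = set(baseline) | set(current)
    return sorted(n for n in names if baseline.get(n) != current.get(n))
```

Legitimate memory updates will show up too, so a change is a prompt to read the diff, not proof of compromise.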

Final thought

OpenClaw is powerful, and that's exactly the problem. It's not a chatbot that you can casually deploy. It's an agentic system that can touch your files, your browser, your shell, your messaging platforms, and your credentials, all at once.

If you're going to use it, treat it like what it is: a high-impact system that needs proper auth, tight tool policies, sandboxing, network isolation, and regular audits of every skill you install.

Don't trust the model. Don't trust community plugins. Don't assume approval dialogs are security boundaries. And definitely don't leave the Gateway open on the network.